For more detailed information, we can use the help function of the R. We can see the character counts and metadata information in vcorpu. Using the above lines of code we can call the library.įor making a term-document matrix in R, we are using crude data which comes with the tm library and it is a volatile corpus of 20 news articles which are dealing with crude oil. Instead of term-document and document-term matrix, we have various facilities available in the library from the field of text mining and others. Using the above lines of codes, we can install the text mining library. For this purpose, we are required to install the tm(text mining) library in our environment. In this section of the article, we are going to see how we can create a term-document matrix using the R language. Since R and python are two common languages that are being used for the NLP, we are going to see how we can implement a term-document matrix in both of the languages. More formally we can say that it is the way to represent the relationship between words and sentences presented in the corpus. Term document matrices are one of the most common approaches which need to be followed during natural language processing and analyzing the text data. From this matrix, we can get the total number of occurrences of any word in the whole corpus and by analyzing them we can reach many fruitful results. The above table is a representation of the term-document matrix. The term-document matrix of these responses will look like this: Here, we can see a set of text responses. The dice under the matrix represent the number of occurrences of the words.

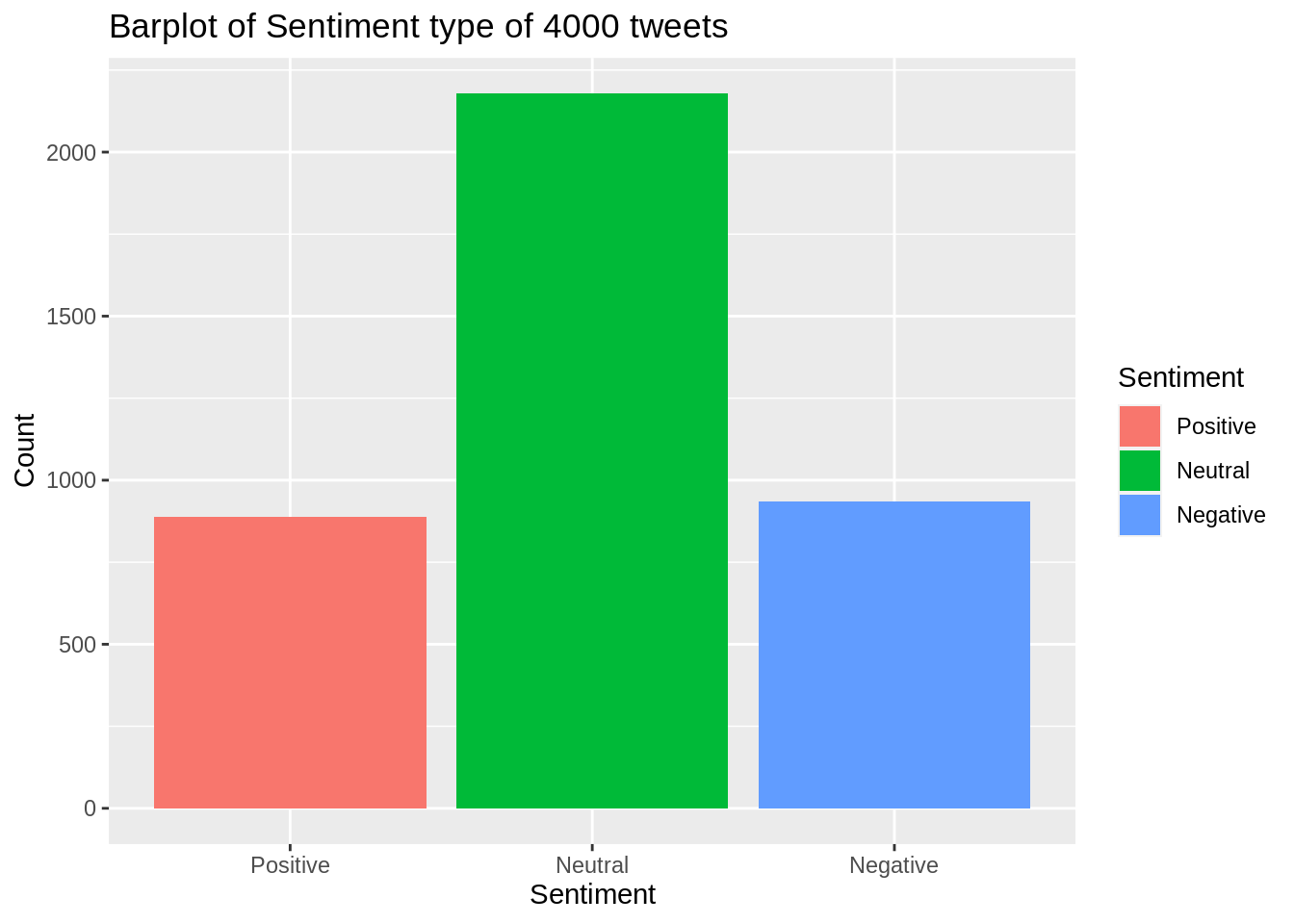

The rows of the matrix represent the sentences from the data which needs to be analyzed and the columns of the matrix represent the word. In this method, the text data is represented in the form of a matrix. Term document matrix is also a method for representing the text data. In natural language processing, we see many methods of representing text data. #Frequency word plots are here as followsīarplot(d25$freq, las=2, names.Let’s start the discussion by understanding what the term-document matrix is. Tdocs <- tm_map(tdocs, removePunctuation)Ī list of the 25 most commonly used words in Twitter dtm <- TermDocumentMatrix(tdocs) Tdocs <- tm_map(tdocs,removeWords, stopwords("english")) Tdocs <-tm_map(tdocs, content_transformer(tolower)) twitter_edited <- str_replace_all(string=twittersample, pattern= "", replacement= "")Ĭonvert to a corpus, and use the tm package to clean the texts tdocs<- Corpus(VectorSource(twitter_edited)) Special characters in Twitter & â €¦™ ð Ÿ ¥ were removed. The Twitter file size of 2360148 elements (read from the environment window), tfilePath <- "C:/Users/mlrob/Documents/en_US/en_US.twitter.txt" Load the text files from the Working Directory. Here is my code in R Markdown, which knitted just fine. Tdocs <- tm_map(tdocs, toSpace, <- tm_map(tdocs, toSpace, "<")įor some strange reason, the special characters that I deleted appear again in my analysis in the bi-grams and trigrams. ToSpace <- content_transformer(function(x, pattern) gsub(pattern, " ", x)) Tdocs <- Corpus(VectorSource(twittersample)) Twitter <- readLines(tfilePath, skipNul = TRUE) TfilePath <- "C:/Users/mlrob/Documents/en_US/en_US.twitter.txt" How can I eliminate unwanted characters but still get knitr to work? library(tm) I can't not remove in my project, however. When I commented out the removal of special characters, knitr worked fine. It worked successfully in r, but knitr refused to execute. I wish to remove some special characters from a text corpus.
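The R workflow for the crude corpus (install and load tm, load the data, build the matrix) can be sketched roughly as follows. This is a minimal sketch, assuming the tm package is available from CRAN; the `control` options and the frequency threshold of 10 are illustrative choices, not taken from the article.

```r
# Assumption: tm is installed; if not, run install.packages("tm") once.
library(tm)

# Load the crude corpus bundled with tm (20 news articles on crude oil).
data("crude")

# Printing the corpus gives a short summary of its documents and metadata.
print(crude)

# Build the term-document matrix: terms in rows, documents in columns.
# The control options below are illustrative, not the article's settings.
tdm <- TermDocumentMatrix(
  crude,
  control = list(removePunctuation = TRUE, stopwords = TRUE)
)

# Total number of occurrences of each term across the whole corpus.
term_totals <- sort(rowSums(as.matrix(tdm)), decreasing = TRUE)
head(term_totals, 10)

# Terms that occur at least 10 times overall (threshold is arbitrary).
findFreqTerms(tdm, lowfreq = 10)
```

For more detail on any of these functions, `help(TermDocumentMatrix)` or `?crude` opens the corresponding documentation page.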
